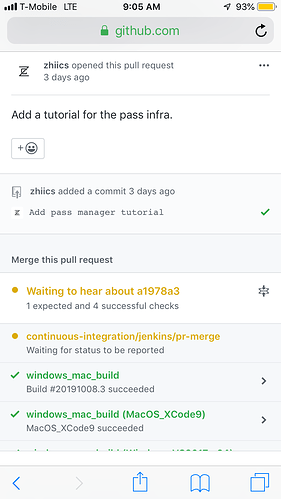

Hi, I just noticed that some PRs don’t appear to be running the CI check continuous-integration/jenkins/pr-merge. For example, #4077 is merged but doesn’t appear to have run that check.

Why are some PRs landing without these CI checks? What am I missing?

Cheers

/Marcus