Hi, I don’t fully understand the curve feature. Could you explain it simply? Thank you.

feature_type: str, optional

If is ‘itervar’, use features extracted from IterVar (loop variable).

If is ‘knob’, use flatten ConfigEntity directly.

If is ‘curve’, use sampled curve feature (relation feature).

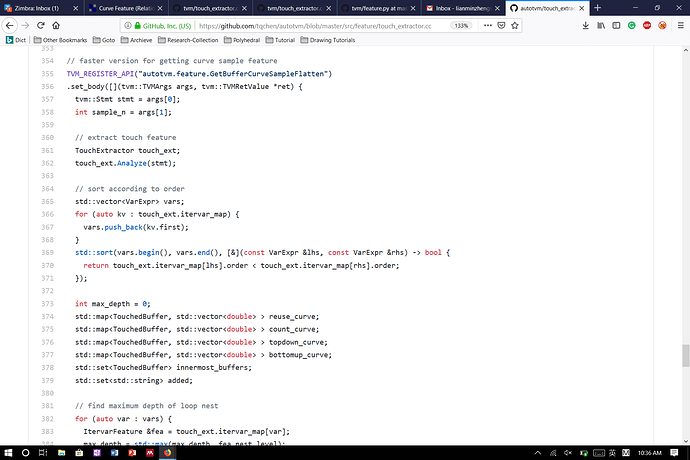

Hi @merrymercy, I noticed that the CurveSampleFeature extraction function parses the second argument (sample_n) as a bool, see here, while it should be an int, see here. As a result, we always take 1 sample, which is probably not the intention – or am I missing something?

Was this code used in the experiments in “learning to optimize tensor programs”? If so, how are the transfer learning results affected (if at all)?

If curve features do work as intended, could you give a hint as to why this representation is easier to transfer than “itervar” or “knob”? Are the curve features not also of different dimensionality for different workloads? I guess I don’t completely understand how you turn these features into fixed-size vectors.

Thanks in advance!

Sorry for the late reply.

The bug you found is a fatal one: sample_n should be parsed as an int. You are welcome to send a pull request to fix it.

Luckily, I checked my old experiment code in our old private repo, and it was correct! (I had some unit tests when I first implemented it.)

I refactored my code before the open source release. This bug was introduced at that time.

I am sorry that I didn’t test the refactored code thoroughly (i.e. re-run the experiments), but I can assure you that the code used for the experiments was correct.
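To make the effect of the bool/int mix-up concrete, here is a minimal Python sketch. It is not the TVM implementation (the real extraction code is not written in Python); `sample_points`, `extract_buggy` and `extract_fixed` are hypothetical names used only for illustration. It shows how coercing a sample count to a bool collapses any non-zero value to 1.

```python
def sample_points(curve, sample_n):
    """Pick sample_n roughly evenly spaced points from a list of (x, y) pairs."""
    if sample_n <= 1:
        return curve[:sample_n]
    last = len(curve) - 1
    return [curve[round(i * last / (sample_n - 1))] for i in range(sample_n)]


def extract_buggy(curve, sample_n):
    # Illustrative bug: the count is read as a bool first,
    # so every non-zero value turns into True, i.e. 1.
    return sample_points(curve, int(bool(sample_n)))


def extract_fixed(curve, sample_n):
    # The count is read as an int, so the requested number of samples is kept.
    return sample_points(curve, int(sample_n))


# A made-up curve with 8 points.
curve = [(i, 2 ** i) for i in range(8)]

buggy = extract_buggy(curve, 4)
fixed = extract_fixed(curve, 4)
print(len(buggy), len(fixed))
```

With the bool coercion, asking for 4 samples yields a single point, which matches the "we always take 1 sample" symptom reported above.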

The curve feature is easier to transfer because it produces fixed-size vectors for all AST structures. It samples a constant number of points (the parameter sample_n) from the curves and flattens their coordinates, so the size of the feature vector is fixed. The other feature types, itervar and knob, cannot guarantee fixed-size feature vectors across different workloads.

The curve feature is loop-number-invariant and captures the general tiling structure.
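The difference in vector size can be sketched as follows. This is only an illustration under assumptions: the curve used here (cumulative log2 of loop extents against normalized loop depth) and the helper names `itervar_like` and `curve_like` are made up for the example and are not the features TVM actually computes. What it does show is the mechanism described above: concatenating per-loop values gives a length that depends on the number of loops, while sampling `sample_n` points from a curve and flattening their coordinates does not.

```python
import numpy as np


def itervar_like(loop_extents):
    """Concatenate two numbers per loop; the length grows with the loop count."""
    feats = []
    for extent in loop_extents:
        feats.extend([float(extent), float(np.log2(extent))])
    return np.array(feats)


def curve_like(loop_extents, sample_n=4):
    """Sample a fixed number of points from one curve over the whole loop nest.

    Assumes at least two loops so that the curve has two or more points.
    """
    # Hypothetical curve: y is the cumulative log2 extent, x is the loop depth in [0, 1].
    y = np.cumsum(np.log2(np.asarray(loop_extents, dtype=float)))
    x = np.linspace(0.0, 1.0, num=len(y))
    # Sample sample_n points by linear interpolation, then flatten the (x, y) pairs.
    xs = np.linspace(0.0, 1.0, num=sample_n)
    ys = np.interp(xs, x, y)
    return np.stack([xs, ys], axis=1).ravel()


# Made-up loop nests: one with six loops, one with three.
six_loops = [4, 8, 16, 3, 3, 7]
three_loops = [32, 32, 64]

print(itervar_like(six_loops).shape, itervar_like(three_loops).shape)
print(curve_like(six_loops).shape, curve_like(three_loops).shape)
```

In this sketch the per-loop vectors have 12 and 6 entries, while both sampled-curve vectors have 2 * sample_n = 8 entries, regardless of how many loops the nest contains.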

Great, glad to see that the paper is unaffected.

Thanks for the detailed explanation, I was under the impression that you’d somehow obtain the curve features for every node in the AST, but if I understand correctly they actually capture all the relations in the AST in one vector.

I guess this feature extraction code only exists on your fork, but do you recall what the additional features are for the simplified AST? You refer to these as length, the one-hot node type, buffer access pattern (topdown product, count and reuse).

> Thanks for the detailed explanation, I was under the impression that you’d somehow obtain the curve features for every node in the AST, but if I understand correctly they actually capture all the relations in the AST in one vector.

Yes. For GBDT (XGBTuner), we always capture all the relations in one vector.

> I guess this feature extraction code only exists on your fork, but do you recall what the additional features are for the simplified AST? You refer to these as length, the one-hot node type, buffer access pattern (topdown product, count and reuse).

The curve feature is for GBDT (XGBTuner) only. Its code exists in the master branch.

In my branch, there is code for TreeGRU. For TreeGRU, we don’t use the curve feature (relation feature). Instead, we use additional features for every AST node and feed the entire AST into a TreeGRU model. You are correct that these features are length, one-hot node type, etc.

I think your confusion is mainly due to mixing up these feature types for GBDT and TreeGRU.

Hi @merrymercy. I tried both the ‘itervar’ and ‘curve’ features with the XGBoost tuner in the tutorial “Auto-tuning a convolutional network for x86 CPU”. It turned out that the length of the extracted feature vector was constant for ‘curve’ and also for ‘itervar’. Based on my understanding, I expected different conv2d tasks to produce ‘itervar’ features of varying length. Did I miss something? Could you give me a hint? Any feedback would be appreciated.

| | curve feature | itervar feature |
|---|---|---|
| conv2d with different shapes | same length | same length |
| conv2d and matmul | same length | different lengths |
| conv2d and softmax | different lengths | different lengths |