Introduction

Hello all. I have been interested in the TVM stack for a while. Especially I find the example of the VTA backend as a Unique Selling Point of TVM for me.

I might be wrong (correct me if I am wrong), but TVM is the only framework with an example of how to add an accelerator which does not have LLVM or other established compilation flow. So cudos from my side.

I looked into all TVM tutorials, but concentrated on understanding the VTA tutorials.

I thought to myself “I only need to know how to plug-in to TVM and then all will be fine”.

The tutorials are REALLY helpful to get a first understanding, but there are just some things that no matter how often I run the code I still dont understand.

So I have compiled a list of questions. Some are specific to the VTA and some are more TVM general. But in both cases I have mostly used the resnet.py example to come to these questions.

It is somewhat lengthy and therefore I apologize for the long read.

Questions

-

I was wondering about the graph which is read here. The format seems odd. NNVM is able to read many graphs from different frontends.

- How was this .json file generated and why wasn’t one of the supported frontends used?

- Why does the graph have many “non-standard” (all the clips, rshift, and casts) DL nodes?

My intuition tells me that it was necessary to check (assert?) if what is computed in the CPU+VTA architecture is the same as what is computed in the CPUonly case.

-

The functions defined in vta/graph.py according to my intuition are used to parse the graph, since nnvm cannot do it at this level.

- clean_cast seems to transform the json nodes into nnvm.symbols. Is this basically the “frontend parser” of the type of json description?

- _clean_cast What is this function doing? How is it doing it (code intuition since no comments are available) Why was it necessary?

- clean_conv What is this function doing? How is it doing it (code intuition since no comments are available) Why was it necessary?

- pack I understand that the conv2ds need to be in a certain layout for the VTA to process them so that’s fine, but why are max_pool2d calling _pack_batch_channel while global_avg_pool2d calls _unpack_batch_channel. Neither operations should be calculated on the accelerator of the VTA so why change their layouts? (i.e. if this is a common optimization why isn’t it part of the normal nnvm passes?)

-

After the graph has been parsed and the above functions have been called, the actual nnvm/tvm stack compilation flow is called. Obviously the vta.build_config is used which extended the tvm lowering passes by those required for communicating with the VTA runtime. In general this is clear to me, but some specifics are still not clear (for me).

-

In nnvm/compiler/build_module.py: build This function is supposed to be the high level graph optimization. What bothers me is more the way the code looks. The PruneCompute and OpFusion are part of the build function, while AlterOp, SimplifyInference and FoldScaleAxis are handled by the optimize function.

- These questions are more TVM general.

-

Why was such a distinction done?

-

Although optimize states “being target independent” it uses the with target: statement. Is there any target specific information needed for the optimize function?

-

Is there no way to add nnvm passes? (the lowering process allows user defined passes, but I don’t see anything similar here)

-

Does “PrecomputePrune” split the graph in two partitions? (i.e. the intersection is empty)

-

“GraphFuse” is actually not fusing operators. I get the same node size in the graph before and after, but after “GraphCompile” the nodes

are reduced. Is this always so? (I would have expected “GraphFindFusibleGroups” to identify what can be fused and then “GraphFuse” to actually fuse)

-

- These questions are more VTA specific.

-

Why was the compute rule for clip defined, since TOPi already has a clip operator?

-

In schedule_packed_conv the elementwise operations seem to be mapped to ALU opcodes of the VTA accelerator. This seems to work well for the example given, but I could construct a graph with some elementwise operation (right after the conv, like taking the abs) and this would not be mappable directly to an ALU opcode.

How was it guaranteed that only mappable elementwise operations were part of the fused operator? (my intuition is that this was given partially by the designers knowing which graph they need to parse and by some of the functions in vta/graph.py) -

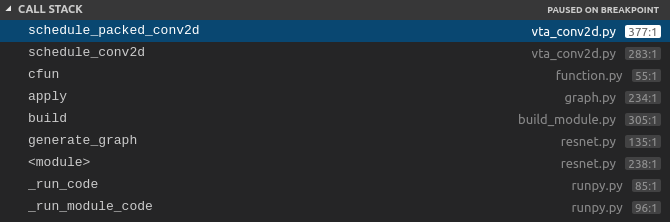

Also concerning schedule_packed_conv, when is this function actually called? (in the call stack I can see that “GraphCompile” calls it but I cant really pinpoint where exactly in the internal API it is being called)

- This question is more TVM general

- What are the level parameters in the compute and schedule registering actually used for and what is a good number?

-

- This question is more TVM general.

- What are the statements tvm.register_func(“tvm.info.memtvm.info.mem.%s % <some_string>”) actually doing? how is this information being used downstream in the compilation stack?

-

I think I understand the general concept of the lowering part.

While the conv2d schedule is constructed some pragmas are inserted. These are used by the functions inside vta/ir_pass.py. These are therefore the “VTA backend” (I know they only generate VTA runtime directive but still). These are added by the vta/build_module.py functions into the lowering phases of TVM tvm/build_config.py. Therefore at the end of “GraphCompile” we have llvm code for those operators which map to the ARM core and VTARuntime calls for those operators which are mapped to the VTA accelerator.

- Is this accurate? Am I missing something?

-

-

In general, I have had trouble to understand some of the execution of the code, due to the fact that python calls functions of the precompiled shared library.

-

What is the recommended setup to debug both at the Python API level and the internal C++ API level?

-

I seem to have problems accessing some internal variable of some objects when debugging. Can it be that objects which get internal variables in the C++ code dont update the dict ?

-